A Guide for High Risk and Generative AI Providers

The European Union’s AI Act is a pioneering piece of legislation aimed at regulating artificial intelligence systems. As we edge closer to 2026, when most provisions of the Act will be in full force, it’s crucial for AI developers and providers worldwide to understand its implications, especially for high-risk and generative AI.

This post covers the essential aspects of the AI Act: its risk-based approach, its enforcement schedule, and the specific obligations it places on providers of high-risk and generative AI systems.

The EU AI Act: A Risk-Based Regulatory Framework

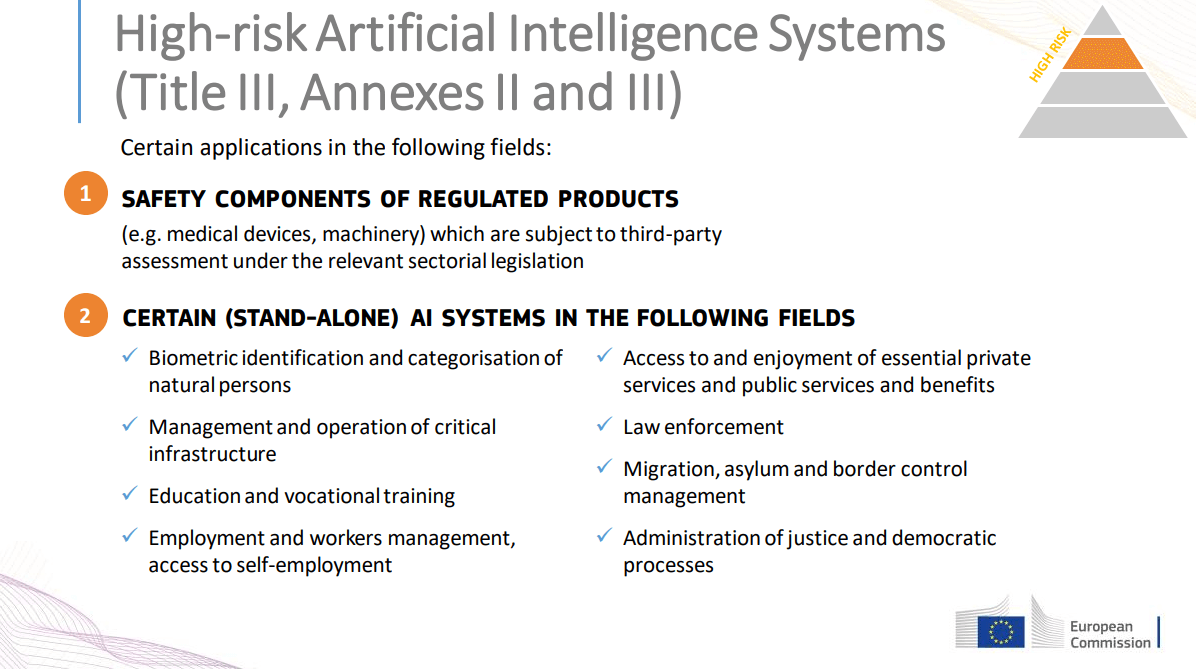

The AI Act introduces a risk-based approach to AI regulation, sorting AI systems into four risk levels: Prohibited AI, High Risk AI, Limited Risk AI, and Minimal Risk AI. This classification determines the regulatory requirements that apply to each AI system, with the most stringent obligations targeting high-risk systems and generative AI models that carry systemic risk.

The Timing and Phases of the EU AI Act

The EU AI Act changes how artificial intelligence systems are regulated across Europe. Most provisions become applicable 24 months after the Act takes effect, which places full implementation in 2026, and a phased enforcement schedule gives businesses and developers time to adapt. This timeline is critical for understanding the urgency and planning required for compliance:

- Immediate Action for Prohibited AI: Certain AI applications, deemed too risky, are to be phased out within six months of the Act’s effective date. This category includes AI systems that manipulate human behavior to circumvent users’ free will or systems allowing ‘social scoring’ by governments.

- Generative AI Regulation: Within 12 months of the effective date, specific rules targeting generative AI come into force, so companies developing or deploying such technologies need to prepare early.
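
To make the schedule above concrete, here is a minimal Python sketch that turns an effective date into phase deadlines. The six- and twelve-month offsets come from the list above; the function names, the dictionary keys, and the effective date used in the example are placeholders chosen for illustration, not values taken from the Act.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the date `months` calendar months after `start`.

    If the target month is shorter than the start day (e.g. 31 Aug + 6 months),
    the day is clamped to the last day of the target month.
    """
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


# Offsets in months, as described in the schedule above.
PHASE_OFFSETS = {
    "prohibited_ai_phase_out": 6,
    "generative_ai_rules": 12,
}


def phase_deadlines(effective_date: date) -> dict[str, date]:
    """Map each phase name to its deadline for a given effective date."""
    return {
        name: add_months(effective_date, offset)
        for name, offset in PHASE_OFFSETS.items()
    }


if __name__ == "__main__":
    # Placeholder effective date, for illustration only.
    for name, deadline in phase_deadlines(date(2024, 6, 1)).items():
        print(f"{name}: {deadline.isoformat()}")
```

A compliance team could swap in the actual effective date and add further phases to `PHASE_OFFSETS` as they become relevant. Legal deadlines can be defined to the day, so any date produced this way should be checked against the official text.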

Systemic Risk and Generative AI

The Act presumes systemic risk in generative AI models trained with more than 10^25 FLOPs (floating-point operations) of cumulative compute. The Act does not name individual models, but OpenAI’s GPT-4 and potentially Google DeepMind’s Gemini are widely expected to be among the first to meet this criterion. The European Commission is empowered to adjust the FLOP threshold to keep pace with technological advancements, so that the regulation remains relevant.
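
As a rough illustration of how a developer might check a model against this threshold, the Python sketch below takes the threshold to be 10^25 floating-point operations. The estimate of 6 × parameters × training tokens is a common rule of thumb for dense transformer training compute, not a method defined by the Act, and all function names are hypothetical.

```python
# Threshold for presumed systemic risk: 10^25 floating-point operations.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(n_parameters: float, n_training_tokens: float) -> float:
    """Approximate training compute with the 6 * N * D rule of thumb.

    This is a widely used heuristic for dense transformers, not a
    calculation prescribed by the AI Act (assumption for illustration).
    """
    return 6.0 * n_parameters * n_training_tokens


def exceeds_systemic_risk_threshold(
    training_flops: float,
    threshold: float = SYSTEMIC_RISK_FLOP_THRESHOLD,
) -> bool:
    """Return True if the training compute is strictly above the threshold."""
    return training_flops > threshold


if __name__ == "__main__":
    # Hypothetical model sizes, for illustration only.
    small = estimate_training_flops(70e9, 2e12)   # 70B parameters, 2T tokens
    large = estimate_training_flops(1e12, 1e13)   # 1T parameters, 10T tokens
    print(exceeds_systemic_risk_threshold(small))
    print(exceeds_systemic_risk_threshold(large))
```

The threshold is kept as a parameter because the Commission can revise it. A real assessment would use measured training compute where it is available, not an estimate.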

High-Risk AI: Definition and Obligations

High-risk AI systems are those that could significantly affect the safety, rights, and freedoms of individuals. These include AI systems integral to product safety, those requiring third-party conformity assessments, and specific applications listed in the Act. Providers of such AI must conduct pre-release conformity assessments, establish internal quality and risk management systems, and meet EU registration and compliance requirements.

Enforcement Schedule: A Graduated Approach

The Act’s graduated enforcement schedule underlines a progressive approach to compliance, reflecting the varying degrees of readiness and impact among AI systems:

- Prohibited AI: Immediate action with a six-month compliance window.

- High-Risk and Generative AI: Extended timelines, with generative AI regulations activating within 12 months, offering developers time to align their systems with the new legal framework.

The Cost of Non-Compliance: A Significant Consideration

The AI Act stipulates substantial penalties for non-compliance, aiming to ensure adherence and mitigate the risks associated with AI technologies. Fines scale with the type of violation. For prohibited AI practices they can reach the higher of €35 million or 7% of the company’s total worldwide annual turnover for the preceding financial year. For most other breaches, including those of high-risk obligations, the ceiling is the higher of €15 million or 3%. Either way, overlooking the Act’s requirements carries serious financial risk.
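
The "higher of a fixed amount or a share of turnover" rule is simple arithmetic, and a short Python sketch shows how the ceiling moves with company size. The function name is hypothetical. The cap and percentage are passed in as parameters and the numbers in the example are placeholders, so substitute the figures for whichever penalty tier applies.

```python
def maximum_fine(
    annual_turnover_eur: float,
    fixed_cap_eur: float,
    turnover_share: float,
) -> float:
    """Return the fine ceiling: the higher of a fixed amount or a share of turnover.

    `turnover_share` is a fraction, e.g. 0.02 for 2%.
    """
    if annual_turnover_eur < 0 or fixed_cap_eur < 0 or turnover_share < 0:
        raise ValueError("inputs must be non-negative")
    return max(fixed_cap_eur, turnover_share * annual_turnover_eur)


if __name__ == "__main__":
    # Placeholder tier (10 million EUR or 2%), for illustration only.
    cap, share = 10_000_000, 0.02
    for turnover in (100_000_000, 500_000_000, 2_000_000_000):
        print(turnover, maximum_fine(turnover, cap, share))
```

With these placeholder values the fixed amount sets the ceiling until turnover reaches €500 million. Above that, the percentage takes over, which is why large providers face far greater exposure than the headline figure suggests.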

For High-Risk AI Providers

High-risk AI providers are subject to comprehensive compliance measures, including pre-release conformity assessments and the establishment of internal management systems. These requirements aim to ensure that high-risk AI systems are trustworthy, transparent, and secure before they reach the market.

For Generative AI Developers

Generative AI developers, especially those dealing with systemic risks, face stringent scrutiny. They must comply with EU copyright laws, ensure transparency, maintain detailed technical documentation, and collaborate with the European AI Office to mitigate risks and ensure cybersecurity.

Implications for Global AI Providers

The AI Act’s broad application means that any AI system available on the EU market or affecting individuals in the EU falls under its regulations, regardless of where the provider is based. This global reach necessitates a proactive approach from AI developers worldwide to understand the Act’s implications and integrate compliance measures into their development and deployment processes.

Strategies for Compliance and Risk Mitigation

AI developers and providers can take several steps to navigate the complexities of the AI Act and ensure compliance:

- Understand the Classification: Determine whether your AI system falls under the high-risk or generative AI categories and understand the specific obligations that apply.

- Assessment and Documentation: Conduct thorough assessments of your AI systems to ensure they meet the Act’s requirements for data quality, transparency, and security. Maintain comprehensive documentation of these assessments and your AI systems’ technical details.

- Establish Internal Policies: Develop and implement internal policies and procedures for quality and risk management, focusing on areas such as human oversight, accuracy, and cybersecurity.

- Engage with EU Authorities: For providers outside the EU, appointing an authorized representative within the EU can facilitate compliance and communication with European authorities.

The EU AI Act is a pioneering step towards a regulated and responsible AI future. As the 2026 enforcement deadline approaches, AI developers and providers must engage in strategic planning and proactive compliance measures.

Understanding and adhering to the Act’s risk-based regulatory framework ensures not only legal compliance but also fosters a culture of trust, safety, and innovation in AI technologies. By embracing these regulations, the AI community can contribute positively to society, ensuring that AI advancements align with European values and global standards for ethical AI use.
