AI for Doctors: MedGemma Reads Scans, MedASR Writes It Down

Google’s latest open medical AI models bring multimodal intelligence to the clinic and a $100K hackathon to the community. AI for doctors is here!

Google is pushing medical AI into its next phase. The company has just released MedGemma 1.5, a major upgrade to its open multimodal model for medical image interpretation.

Alongside it, Google is introducing MedASR, a new speech-to-text model fine-tuned specifically for medical language.

Together, these models aim to give doctors and developers more adaptable tools for interpreting everything from CT scans to dictated clinical notes. Both are available now on Hugging Face and Vertex AI.

📊 What’s New in MedGemma 1.5

MedGemma was built for the complexity of healthcare — a field where data comes in many forms: images, text, reports, and conversations. The 1.5 release brings significant upgrades across the board:

- High-dimensional imaging: Supports full-volume CT and MRI scans, plus histopathology slides

- Improved accuracy:

  - +3% on disease-related CT findings

  - +14% on MRI findings

  - Major gains in anatomical localization and lab report parsing

- Lighter weight: The 4B-parameter model is optimized for edge use but can also scale in the cloud

“We built MedGemma to reflect the real-world, multimodal nature of medicine — and this update brings us closer to that goal.”

— Daniel Golden, Engineering Manager, Google Research

🗣️ Meet MedASR: AI That Understands Medical Speech

While MedGemma tackles vision and text, MedASR focuses on voice. It’s a new automatic speech recognition (ASR) model designed specifically for the medical domain, and it’s showing impressive results:

- 58% fewer errors than Whisper large-v3 on chest X-ray dictation, based on Google’s internal benchmarks

- 82% fewer errors on diverse internal medical dictation tasks across specialties, also based on internal testing
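To make "fewer errors" concrete, here is a minimal sketch of how a relative error reduction can be computed. It assumes the figures above refer to word error rate (WER), the usual ASR metric, which the announcement as summarized here does not spell out. The sample sentences are invented for illustration and are not taken from Google's benchmarks.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from hypothesis
                curr[j - 1] + 1,     # extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


def relative_error_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction of the baseline's errors that the new system removes."""
    return (baseline_wer - new_wer) / baseline_wer


# Invented example: one dictated sentence and two made-up transcripts.
reference = "no focal consolidation or pleural effusion"
baseline_transcript = "no focal consultation or plural effusion"
improved_transcript = "no focal consolidation or plural effusion"

baseline_wer = word_error_rate(reference, baseline_transcript)
improved_wer = word_error_rate(reference, improved_transcript)
reduction = relative_error_reduction(baseline_wer, improved_wer)
print(f"baseline WER {baseline_wer:.2f}, improved WER {improved_wer:.2f}, "
      f"{reduction:.0%} fewer errors")
```

In this toy case the baseline gets two of six words wrong and the improved transcript gets one wrong, so the relative reduction is 50%. A claim like "58% fewer errors" is the same ratio computed over a whole test set.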

Doctors can use MedASR to dictate clinical notes, while developers can integrate it to power spoken prompts for MedGemma — enabling fully voice-driven AI reasoning in clinical workflows.
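The voice-driven flow described above can be sketched as a two-stage pipeline: audio goes to a transcriber, and the transcript becomes the text prompt for a reasoning model. Everything in this sketch is hypothetical, including the function names and the stub models. It does not use the real MedASR or MedGemma APIs, which should be taken from their official model cards.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical interfaces: any speech-to-text and text-to-text callables fit.
Transcriber = Callable[[bytes], str]
Reasoner = Callable[[str], str]


@dataclass
class VoiceResult:
    transcript: str
    answer: str


def voice_prompt(audio: bytes, transcribe: Transcriber, reason: Reasoner) -> VoiceResult:
    """Transcribe dictated audio, then pass the transcript on as a prompt."""
    transcript = transcribe(audio).strip()
    if not transcript:
        # Do not send an empty prompt downstream.
        raise ValueError("empty transcript; nothing to send to the model")
    return VoiceResult(transcript=transcript, answer=reason(transcript))


# Stand-in stubs so the sketch runs without any model installed.
def fake_transcribe(audio: bytes) -> str:
    return "  summarize the findings in this chest x-ray report  "


def fake_reason(prompt: str) -> str:
    return f"[model output for: {prompt}]"


result = voice_prompt(b"\x00\x01", fake_transcribe, fake_reason)
print(result.transcript)
print(result.answer)
```

Keeping the transcript in the result lets a clinician review what was actually heard before relying on the model's answer. In a real integration, the two stubs would be replaced by calls to the deployed speech and multimodal models.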

💡 Real-World Impact

Since its original release, MedGemma has already made waves:

- Qmed Asia built a conversational AI interface for Malaysia’s clinical practice guidelines

- Taiwan’s National Health Insurance Administration used MedGemma to extract key data from 30,000+ pathology reports, helping guide lung cancer treatment decisions

The new models remain free for both research and commercial use, with tutorials and support available via GitHub and Hugging Face.

Use is subject to each model’s license, and developers must ensure compliance with applicable health data regulations such as HIPAA and GDPR.

🏆 The MedGemma Impact Challenge: $100K Up for Grabs

To inspire more real-world applications, Google is launching the MedGemma Impact Challenge — a global hackathon hosted on Kaggle with $100,000 in prizes.

Developers, startups, and researchers are invited to build innovative healthcare tools using MedGemma and MedASR. The goal? Showcase how open AI models can meaningfully improve patient care.

🛠️ Get Started

MedGemma 1.5, MedASR, and the full Health AI Developer Foundations (HAI-DEF) suite are available now:

- Explore on Hugging Face

- Run on Vertex AI

- Join the MedGemma Impact Challenge

For documentation, benchmarks, and fine-tuning tutorials, visit the HAI-DEF GitHub.

Also to Read

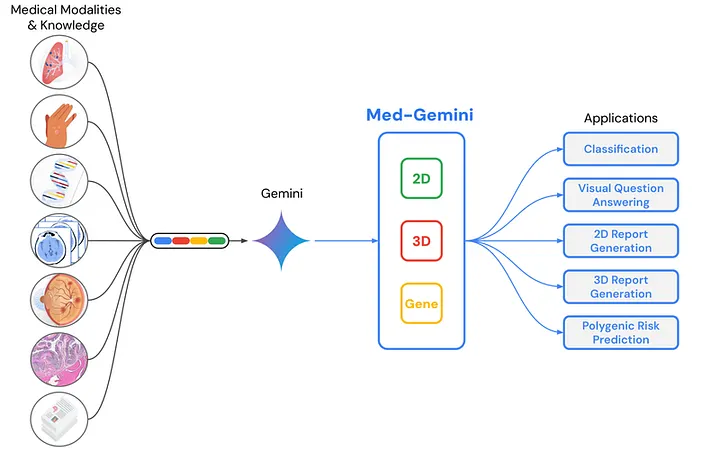

Med-Gemini: Revolutionizing Healthcare with Advanced AI Solutions

Unlocking AI’s Potential in Medicine with Med-Gemini medium.com

Discover more at the intersection of AI and healthcare with AIHealthTech Insider delivered every Monday, free to read. Click here to subscribe.